April 8, 2020

From September 2014 to September 2016, I spent two years in the research-based Master’s program at the University of Toronto (working toward an MASc degree), in the Communications Group of the Department of Electrical and Computer Engineering. As you may have seen on my home page, my research during this time focused on modern communication systems. Given that it is the year 2020, I imagine that by “modern” communication systems readers think of 5G, a topic that has dominated headlines around the globe. With 5G so widely discussed in the world of technology these days, I thought it would be a great opportunity to explain my research to the general public in layman’s terms. I also hope to shed some light on what 5G is (and isn’t), and on how my research contributed, in a very small way, to the advancement of this modern communication network.

The term “5G” is a product of commercial marketing. The “G” stands for the generation (as in age group, not creation) of communication networks, and “5G” implies, but does not really denote, a fifth generation. Here’s the truth: in 1979, Japan launched the world’s first mobile telephone network, which got the ball rolling towards the wireless communication networks we experience today. However, that network transmitted analog signals. Digital communication arrived with GSM (Global System for Mobile Communications), first launched in Finland in 1991. Believe it or not, GSM also gave rise to the cellular network that we still use today. The transition from the analog system of 1979 to its digital counterpart truly deserved the moniker “generation”, because 1G and 2G differed vastly in how they shaped the communication networks we use today. However, the ensuing “generations” (3G, 4G, and now 5G) have been nothing more than incremental improvements to GSM and the infrastructure it brought. Their goals are the ones you might expect: making the network faster, more secure, and more reliable. For example, 3G brought CDMA and 4G brought LTE. 5G seeks to bring mm-waves (which have been controversial), C-RAN (largely in theory), Massive MIMO, and Heterogeneous Networks (my research, which I will discuss in detail later), among other things. Of course, commercial mobile networks are owned by large corporations all over the world that have sought to make big bucks from their monopolies over the airwaves. Therefore, with each incremental improvement, the emerging technologies get blown up and marketed as a game-changing new “generation”, so that those corporations can justify raking in immense profits. Unfortunately, in my opinion, doing so cheapened the very idea of naming the evolution of communication networks in generations.
I can ramble for days about what 5G should be called and how the reality often isn’t what I wanted, but I will just leave it here: 5G is just 2G—Update 3. Until quantum communication is possible, we are not in a new “generation” of modern communication networks.

Okay, so if you are still with me, you get the gist of what 5G is about: the 2020 version of the cellular network introduced back in the year I was born. However, with all of the media attention, it might feel like 5G is a big deal. It can seem like something that surely deserves to be called a game-changer, ending the era of whatever communication systems we have been stuck with. It can also seem to usher in a new, unknown, and perhaps dangerous territory of technology, like the opening of Pandora’s Box. Well, one obvious reason why 5G is so hotly discussed is recency bias. Add to this the fact that we live in the social-media, post-truth era. Just about any of planet Earth’s 7.6 billion denizens can chime in on the subject, bringing opinions, information, and misinformation. Ironically, they do so through the very cellular network under discussion. If social media had been as prevalent in 2010 as it is today, we would have heard the same things about 4G that we hear today about 5G. There is nothing anomalous about it, and those of you time travelers reading this will agree with me about 6G as well.

The elephant in the room is obvious: does 5G give me cancer? To someone researching in the field of 5G, this might be a laughable question. It could earn the asker sneers and a dismissal as a conspiracy theorist. However, I want to break that question down, because it gives me a chance to a) explain the architecture of a modern communication network, b) present the pieces that make up the 5G puzzle, and c) lead into my research. Let me first answer the elephant: no, 5G will not give you cancer. You have a better chance of getting cancer from the infrared radiation that every human body emits because of its temperature (itself a chance close to 0%) than from “5G”. Now let’s get back to breaking it down. A communication network is supported by layers, as follows:
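
- Host layers: Application, Presentation, Session, Transport
- Media layers: Network, Data Link, Physical
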
Each layer builds on the services of the layer below it. The media layers build the infrastructure that transmits signals through the air and routes them to the correct destination. The host layers manage the content being communicated. The question “does 5G give me cancer?” lies in the media layers, in the physical layer to be exact. During transmission, the raw data drives oscillating currents in the antennas, and those oscillating electrons radiate electromagnetic waves (EM waves) through the air. The fear is that one’s cells will not reproduce properly if exposed to these EM waves. In particular, the special “waves” that 5G produces lead members of the general public to fear that they are particularly harmful.

So now let me briefly, briefly, briefly summarize what 5G is about, so that I can continue the discussion of what those “waves” are. Remember what I said way back: 5G is another round of improvements to the cellular network that first emerged in 1991 as “2G”. By “improvements”, I mean making our communication network faster, more secure, and more reliable. The “faster” part is of course the top priority. Countless researchers at institutions around the world (including me) have devised algorithms and optimized mathematical models, all to show how we can transmit more data per unit time. The catch is that we must do so within the limited bandwidth that wireless corporations pay billions to obtain. This push for speed also shaped the technologies that make up 5G. As a gross generalization, they fall into four categories:

1. mm-waves
2. C-RAN
3. Heterogeneous Networks (HetNets)
4. Massive MIMO

My research centers around the following formula:

$$C = W \log_2\left(1 + \mathrm{SINR}\right)$$

This is the famous Shannon equation (also known as the Shannon–Hartley theorem), based on Claude Shannon’s groundbreaking work on information theory and its limits. It concerns the capacity C of a channel, i.e. the maximum rate at which data can be reliably transmitted over it. That capacity equals the bandwidth W of the channel times the base-2 logarithm of 1 plus the Signal-to-Interference-plus-Noise Ratio (SINR). The SINR is the ratio of the desired signal power to the power of everything unwanted, namely interference (cross talk from other transmissions) plus background noise. Items 2 to 4 seek to improve the SINR of the network through clever design and signal processing, but they improve capacity only logarithmically. Item 1, by contrast, drops the hammer and seeks to improve capacity linearly by increasing the available bandwidth. You see, the higher the carrier frequency of the EM waves used to transmit data, the more spectrum is available. That makes wider bandwidths W, and hence a higher C, possible. Back in 1979, 1G transmitted analog FM signals at carrier frequencies of several hundred MHz. Plug this into the wave equation λ = c/f and you will find wavelengths of tens of centimeters. From GSM all the way up to 4G, carriers crept up into the low GHz range, where wavelengths shrink towards ten centimeters; loosely, we can call those “centimeter waves”. So, with “mm-waves”, you get the pattern. For the physical layer, 5G proposes amping up W as the most effective way of improving C. To do so, it uses EM waves with wavelengths on the order of millimeters, i.e. frequencies of tens of GHz (the mm-wave band formally spans 30 to 300 GHz). You might wonder why we haven’t been doing this earlier. The higher the frequency of an EM wave, the more strongly it is attenuated over distance and by obstacles, and at some frequencies by the atmosphere itself, so mm-wave signals only reach short distances. With 5G, the infrastructure is being set up to support the propagation of mm-waves. Therefore, it was not a matter of people ignoring mm-waves until now. Rather, we have been planning for a good decade and a half to bring mm-waves into the game.
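
To see the linear-versus-logarithmic difference in numbers, here is a minimal Python sketch of the Shannon formula and the wave equation λ = c/f. The 20 MHz channel, the SINR of 100 (20 dB), and the three carrier frequencies are illustrative values I picked for this post. They are not figures from my research.

```python
import math

C_LIGHT = 299_792_458.0  # speed of light in m/s


def shannon_capacity(bandwidth_hz: float, sinr_linear: float) -> float:
    """Shannon capacity C = W * log2(1 + SINR), in bits per second."""
    return bandwidth_hz * math.log2(1.0 + sinr_linear)


def wavelength_m(freq_hz: float) -> float:
    """Wave equation: wavelength = c / f, in meters."""
    return C_LIGHT / freq_hz


if __name__ == "__main__":
    # Illustrative baseline: a 20 MHz channel at SINR = 100 (20 dB).
    print(f"baseline:         {shannon_capacity(20e6, 100.0) / 1e6:6.1f} Mbit/s")
    print(f"double bandwidth: {shannon_capacity(40e6, 100.0) / 1e6:6.1f} Mbit/s")
    print(f"double SINR:      {shannon_capacity(20e6, 200.0) / 1e6:6.1f} Mbit/s")
    # Example carriers: an older cellular band, a 4G band, a 5G mm-wave band.
    for f in (800e6, 2.6e9, 28e9):
        print(f"{f / 1e9:5.1f} GHz -> wavelength {wavelength_m(f) * 100:6.2f} cm")
```

Doubling W doubles C. Doubling the SINR at this level adds only about one extra bit per second per hertz, which is the whole case for chasing bandwidth.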

Now back to the elephant again. When people hear about 5G, they undoubtedly hear about mm-waves and how base stations are “closer” to humans so that they can transmit “fast” data to users. The fear stems from the fact that mm-waves are of higher frequency than the cm-waves we have been using for three decades. Base stations are also now closer (a misinterpretation of item 3) and bigger (a generalization of item 4). Against that backdrop, the notion of “zapping” the general public with these high-frequency waves would certainly be unpopular, and this is understandable. However, all is not as it seems. First, mm-wave deployment is still limited. Most of the “5G” you hear about from cellular carriers focuses on other things, such as the infrastructure updates of items 3 and 4, while the raw waves are still cm-waves. If you have spent three decades interacting with your cell phone without any issues, then you will be fine now. Second, even if mm-waves become the prevalent EM waves for 5G, they are still radio waves, and no one will be “zapped” by radio waves. It is true that the higher the frequency of an EM wave, the more energy each of its photons carries. However, the frequency must reach at least the ultraviolet (UV) range for the radiation to be ionizing, i.e. energetic enough to knock electrons off atoms and damage DNA. The energy of a single photon equals its frequency times Planck’s constant h (the Planck–Einstein relation, E = hf), a relation that explains the photoelectric effect. If you plug in the frequencies of UV, X-rays, and gamma rays, then yes, the energy involved can disrupt cellular reproduction. However, mm-waves are radio waves, which have a lower frequency than infrared, which has a lower frequency than visible light, which in turn has a lower frequency than UV. And by “lower”, I mean by orders of magnitude.
Visible light has a frequency more than a thousand times that of mm-waves. If visible light does not give you cancer, then neither will mm-waves.
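
Here is a small Python sketch of the Planck–Einstein relation E = hf applied to a few representative frequencies. The constants are the exact SI values. The specific frequencies are my own illustrative picks, not band edges. The 10 eV ionization threshold is an assumed rule of thumb and should not be read as a precise physical boundary.

```python
PLANCK_H = 6.62607015e-34   # Planck's constant in J*s (exact SI value)
EV_IN_J = 1.602176634e-19   # one electronvolt in joules (exact SI value)
IONIZATION_EV = 10.0        # assumed rule-of-thumb ionization threshold, in eV


def photon_energy_ev(freq_hz: float) -> float:
    """Planck-Einstein relation E = h * f, converted to electronvolts."""
    return PLANCK_H * freq_hz / EV_IN_J


# Representative frequencies (illustrative picks, not exact band edges).
BANDS_HZ = {
    "cm-wave, 2.6 GHz": 2.6e9,
    "mm-wave, 28 GHz": 28e9,
    "body-heat infrared, 30 THz": 30e12,
    "green light, 540 THz": 540e12,
    "far ultraviolet, 3 PHz": 3e15,
}

if __name__ == "__main__":
    for name, f in BANDS_HZ.items():
        energy = photon_energy_ev(f)
        label = "ionizing" if energy >= IONIZATION_EV else "non-ionizing"
        print(f"{name:28s} {energy:10.3e} eV  {label}")
    ratio = BANDS_HZ["green light, 540 THz"] / BANDS_HZ["mm-wave, 28 GHz"]
    print(f"visible / mm-wave frequency ratio: {ratio:,.0f}")
```

A 28 GHz mm-wave photon carries about a ten-thousandth of an electronvolt. That is several orders of magnitude short of what is needed to ionize anything.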

***

That is what I wanted to say to the elephant. Now I want to take this chance to get back to my research. As I mentioned, 5G is composed of four main technologies: mm-waves, C-RAN, heterogeneous networks, and Massive MIMO. They all aim to improve the same equation:

$$C = W \log_2\left(1 + \mathrm{SINR}\right)$$

so that we can have the fastest version of 2G yet. Item 1 (mm-waves), which I have already discussed, focuses on improving W and is by far the most effective way of improving C. Items 2 to 4 improve C by raising the SINR, which only helps logarithmically. However, we have seen that trying to improve C via W is both expensive and, unfortunately, controversial, so logarithmic gains it is.

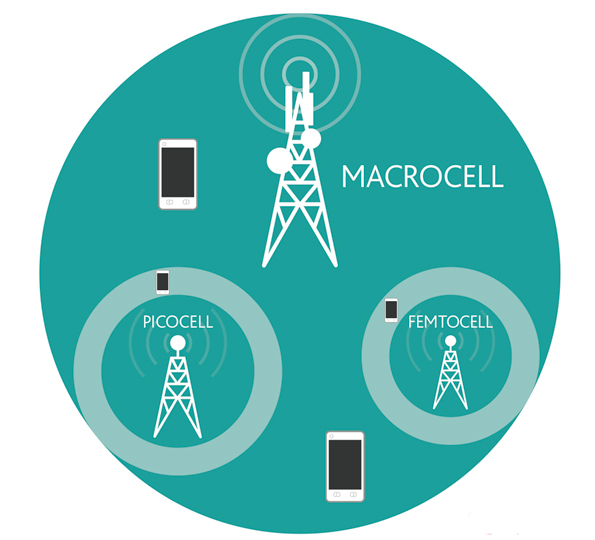

My research is on item 3: heterogeneous networks. This area of 5G is still in the physical layer, and it is about the design of a particular network layout like so:

This builds on the cellular architecture first introduced with GSM in 2G, with user equipment (UE), i.e. smartphones, talking to base stations. In the above model, the base stations you and I have been familiar with are now the “macrocells”. There is a second tier of “picocells” (and perhaps a third tier of “femtocells”), collectively called “microcells”. These are base stations that are closer to UEs but transmit at much lower power than macrocells. They are placed near the edges of macrocells to provide better signal strength to UEs that would otherwise be far from the macrocell they would normally be associated with. The purpose of the HetNet is to improve the SINR in the Shannon equation and therefore improve C, albeit only logarithmically. Furthermore, microcells can reduce the power consumption costs of base stations, as they use much less power than traditional macrocells. However, work needs to be done in order to optimize the HetNet.

Signal processing plays a significant role in making the above HetNet model valuable to 5G. If you recall, the whole point of the HetNet is to improve SINR: microcells serve UEs on macrocell edges in order to improve the signal strength of those far from their macrocell. Unfortunately, if even one UE is associated with a macrocell, then the macrocell will be “active”, by which I mean it will transmit signals to the UEs associated with it. This is all fine and dandy until you realize that the signal to an associated UE is an unwanted signal to every unassociated UE. In other words, it is interference! Suppose a bunch of macrocell-edge UEs are happily associated with a nearby microcell. If the central macrocell is active because it has to serve at least one user, then the microcell UEs will be blasted with interference from the macrocell, and guess what, their SINRs will not improve.
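
To put a number on “blasted with interference”, here is a minimal Python sketch. It computes the SINR of one macrocell-edge UE served by a microcell, with the macrocell asleep and then active. Everything here is a hypothetical toy: the simple distance-based path-loss model, the exponent, the 1 m reference gain, the noise power, the 40 W and 1 W transmit powers, and the distances.

```python
import math

# Hypothetical toy parameters, chosen only for illustration.
PATH_LOSS_EXP = 3.5    # assumed path-loss exponent
REF_GAIN = 1e-4        # assumed channel gain at 1 m (-40 dB)
NOISE_W = 1e-13        # assumed noise power in watts


def rx_power_w(tx_w: float, dist_m: float) -> float:
    """Received power under a simple distance-based path-loss model."""
    return tx_w * REF_GAIN * dist_m ** (-PATH_LOSS_EXP)


def sinr_db(signal_w: float, interference_w: float) -> float:
    """SINR = signal / (interference + noise), in decibels."""
    return 10.0 * math.log10(signal_w / (interference_w + NOISE_W))


# A macrocell-edge UE served by a nearby microcell:
# 50 m from a 1 W microcell, 400 m from a 40 W macrocell.
signal = rx_power_w(1.0, 50.0)
macro_interference = rx_power_w(40.0, 400.0)

sinr_macro_sleeping = sinr_db(signal, 0.0)
sinr_macro_active = sinr_db(signal, macro_interference)

if __name__ == "__main__":
    print(f"macrocell asleep: SINR = {sinr_macro_sleeping:.1f} dB")
    print(f"macrocell active: SINR = {sinr_macro_active:.1f} dB")
```

With these made-up numbers, waking the macrocell to serve a single user elsewhere costs this microcell UE roughly 15 dB of SINR.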

The naïve solution to this problem is Cell Range Expansion (CRE). When UEs decide which base station to associate with, CRE adds a bias to the received signal strength of microcell base stations. This “lures” UEs towards microcells instead of macrocells. Unfortunately, this does not work well, because what if you already have a UE close to a macrocell? Thanks to CRE, if the bias is large enough, that UE may be associated with a faraway microcell. That is a double whammy: the unfortunate user receives a heavily attenuated signal from a base station that wasn’t transmitting strongly to begin with. At its core, this problem calls for better signal processing algorithms rather than a blanket bias that boosts every microcell’s attractiveness.
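
Here is a minimal Python sketch of biased association, showing how a blanket bias can push a UE away from a nearby macrocell. The log-distance path-loss model, the 46 dBm and 30 dBm transmit powers, the distances, and the bias values are all hypothetical choices for illustration. The bias enters only the association decision, not the power the UE actually receives.

```python
import math

# Hypothetical toy parameters for illustration only.
TX_DBM = {"macro": 46.0, "micro": 30.0}  # roughly 40 W and 1 W
PATH_LOSS_EXP = 3.5
REF_LOSS_DB = 40.0  # assumed path loss at 1 m


def rx_dbm(tx_dbm: float, dist_m: float) -> float:
    """Received power in dBm under a log-distance path-loss model."""
    return tx_dbm - REF_LOSS_DB - 10.0 * PATH_LOSS_EXP * math.log10(dist_m)


def cre_associate(dists_m: dict, bias_db: dict) -> str:
    """Pick the base station with the highest received power plus CRE bias.

    The bias only changes the association decision; it does not change the
    power the UE actually receives.
    """
    return max(dists_m, key=lambda bs: rx_dbm(TX_DBM[bs], dists_m[bs]) + bias_db.get(bs, 0.0))


# A UE that is fairly close to the macrocell.
ue = {"macro": 150.0, "micro": 200.0}

if __name__ == "__main__":
    for bias in (0.0, 10.0, 25.0):
        chosen = cre_associate(ue, {"micro": bias})
        lost = rx_dbm(TX_DBM["macro"], ue["macro"]) - rx_dbm(TX_DBM[chosen], ue[chosen])
        print(f"micro bias {bias:4.1f} dB -> {chosen:5s} (signal lost: {lost:.1f} dB)")
```

With a 25 dB bias, the UE is pushed onto the microcell and gives up about 20 dB of received signal power. The bias does this without looking at where the UE actually sits.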

Before I discuss my research’s approach, I want to introduce the trinity of HetNet management. I will focus on the downlink, i.e. from base stations to UEs, since that was the subject of my work. The HetNet management problem is divided into three parts:

1. Association
2. Scheduling
3. Power control

Association is about which base stations talk to which UEs. Scheduling is about how the available resources in each transmission frame are divided among the UEs served by a base station. Power control is about how much power each base station should transmit to each user. Remember: the signal intended for one user is interference for all other users.

A quick literature survey on HetNet management reveals countless papers discussing the above issues, most of which fix one part and jointly solve the other two. When you fix power control, you can solve the association problem iteratively. You use proportional fair scheduling in each step and re-associate until the solution converges. When you fix association, similar iterative algorithms exist for power control, again using proportional fair scheduling. Finally, with scheduling fixed, you guessed it: re-associate and re-calibrate the power.
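
For readers curious what “proportional fair scheduling” means in practice, here is a minimal Python sketch of the classic proportional fair (PF) scheduler. Each time slot, it serves the user with the largest ratio of instantaneous rate to average throughput, then updates the averages with an exponentially weighted moving average. This is only the textbook scheduler. It is not the specific iterative association or power-control algorithms from the literature I surveyed. The constant per-user rates, the time constant, and the slot count are illustrative assumptions.

```python
def proportional_fair(rates, num_slots=10_000, time_constant=100.0):
    """Classic proportional fair scheduler over time slots.

    rates[k] is user k's achievable rate in every slot (held constant here
    for simplicity; rates must be positive). Each slot, serve the user that
    maximizes instantaneous rate / average throughput, then update every
    user's average with an exponentially weighted moving average.
    Returns the fraction of slots in which each user was served.
    """
    num_users = len(rates)
    avg = [1.0] * num_users       # initial average throughputs (arbitrary, > 0)
    served = [0] * num_users
    beta = 1.0 / time_constant    # EWMA forgetting factor
    for _ in range(num_slots):
        k_star = max(range(num_users), key=lambda k: rates[k] / avg[k])
        served[k_star] += 1
        for k in range(num_users):
            got = rates[k] if k == k_star else 0.0
            avg[k] = (1.0 - beta) * avg[k] + beta * got
    return [s / num_slots for s in served]


if __name__ == "__main__":
    # One strong user and one weak user.
    print(proportional_fair([10.0, 1.0]))
```

A max-rate scheduler would always serve the first user. With constant rates, the PF rule instead ends up splitting the slots roughly evenly, which is the time split that maximizes the sum of the users' log-throughputs.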

My research takes on all three parts at once: I want to jointly solve the HetNet management problem. Note that I am not the first to do so, as the joint problem has been formulated many times before and algorithms have been proposed time and time again. My small contribution was a clever heuristic that improves the odds of landing on a good local optimum, perhaps even the global optimum. The joint problem is not convex, so finding a point that satisfies the KKT conditions does not mean we have solved the problem. For a non-convex problem, the KKT conditions are necessary (under mild regularity conditions) but not sufficient for global optimality. The best way to go about it, in my opinion, was to look at what our model represents. From there, we can reason in a hand-wavy fashion about what we would do to obtain the optimal solution. Then, we turn the hand-waving into math and let the theory of optimization take over.

The hand-waving is simple: we attach a “cost” to each base station. If the base station is active, its cost grows with its power consumption; if it sleeps (i.e. serves no UEs), its cost is zero. Then we maximize an objective function: the network utility minus the total cost of the active base stations. You might see how this discourages macrocells from being active, even when they could gobble up users through sheer signal-strength prowess. This is different from CRE because we don’t blindly assign a bias to each tier of base stations. Instead, we consider where the UEs are placed in the network relative to their base stations. For example, say we have two UEs very close to a macrocell. In the CRE case, if the bias given to the microcells is high enough, both of these UEs will be forced onto a faraway microcell for no good reason. My proposed algorithm weighs the capacity benefit of serving those UEs from the nearby macrocell against the benefit of putting the macrocell to sleep and sending the two UEs elsewhere. If serving them wins, the macrocell stays active and serves them. On the other hand, if letting the macrocell sleep is worth more than keeping it active, then we let it sleep. At the end of the day, math calls the shots. This is especially effective when we have some “undecided” users near the middle of the cell. Without the base station cost, those UEs will all but certainly go with the macrocell in the center rather than the microcells at the edge, because macrocells transmit at higher power. And if one UE goes, all will go, because it only takes one UE to make the macrocell active. Every other UE then has to choose between receiving the macrocell’s transmission as signal or as interference, and you know which they will pick.
With my proposed algorithm, we hope that the “cost” will help “turn off” the macrocell, as it carries a higher cost than microcells due to its power consumption. That cost heuristic pushes the algorithm, i.e. the math, to collectively assign the undecided UEs to an edge microcell. In layman’s terms, we try to solve a trust issue. Without a cost kicker, no UE will “trust” the others not to go to the macrocell and cause a whole bunch of interference. With the cost, the undecided UEs can be more confident that no one will go to the macrocell in the center, because the cost of running it is too high! In fact, the worst deal a macrocell can get is to be associated with just one user. One user! Would the macrocell pay the cost of staying on just to serve one UE? The math will likely say no unless there is a really compelling reason, and that reason is also going to be in numbers and not in hand-waving.
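
To make the cost heuristic tangible, here is a deliberately tiny brute-force sketch in Python. It is not the algorithm from my paper. It exhaustively tries every single-cell association in a made-up one-dimensional network with fixed transmit powers. It scores each association with a proportional-fair (sum-of-log-rates) utility, then subtracts a cost for every active base station. The positions, powers, path-loss model, costs, equal time-sharing within a cell, and the choice of utility are all assumptions for illustration.

```python
import itertools
import math

# Hypothetical 1-D toy HetNet (positions in meters, powers in watts).
BS_POS = {"macro": 0.0, "micro_L": -400.0, "micro_R": 400.0}
BS_TX_W = {"macro": 40.0, "micro_L": 1.0, "micro_R": 1.0}
UE_POS = [-330.0, -250.0, 40.0, 260.0, 340.0]
PATH_LOSS_EXP, REF_GAIN, NOISE_W = 3.5, 1e-4, 1e-13


def rx_w(bs: str, ue_pos: float) -> float:
    """Received power at a UE from base station bs (simple path-loss model)."""
    dist = max(abs(BS_POS[bs] - ue_pos), 1.0)
    return BS_TX_W[bs] * REF_GAIN * dist ** (-PATH_LOSS_EXP)


def objective(assignment: tuple, cost: dict) -> float:
    """Proportional-fair utility minus the cost of every active base station.

    assignment[i] is the base station serving UE i. A base station is active
    iff it serves at least one UE, and only active stations interfere.
    """
    active = set(assignment)
    utility = 0.0
    for ue, bs in zip(UE_POS, assignment):
        interference = sum(rx_w(other, ue) for other in active if other != bs)
        sinr = rx_w(bs, ue) / (interference + NOISE_W)
        rate = math.log2(1.0 + sinr) / assignment.count(bs)  # equal time share in the cell
        utility += math.log(rate)
    return utility - sum(cost[bs] for bs in active)


def best_assignment(cost: dict) -> tuple:
    """Exhaustive search over all len(BS_POS) ** len(UE_POS) associations."""
    options = itertools.product(BS_POS, repeat=len(UE_POS))
    return max(options, key=lambda a: objective(a, cost))


if __name__ == "__main__":
    for macro_cost in (0.0, 5.0):
        cost = {"macro": macro_cost, "micro_L": 0.5, "micro_R": 0.5}
        print(f"macro cost {macro_cost}: {best_assignment(cost)}")
```

Raising the macrocell's cost changes which base stations the search is willing to switch on. With an extremely large cost, the optimum never uses the macrocell at all, mirroring the “turn it off unless the numbers justify it” logic. Exhaustive search only works for toy sizes like this, which is why the real problem needs an optimization algorithm.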

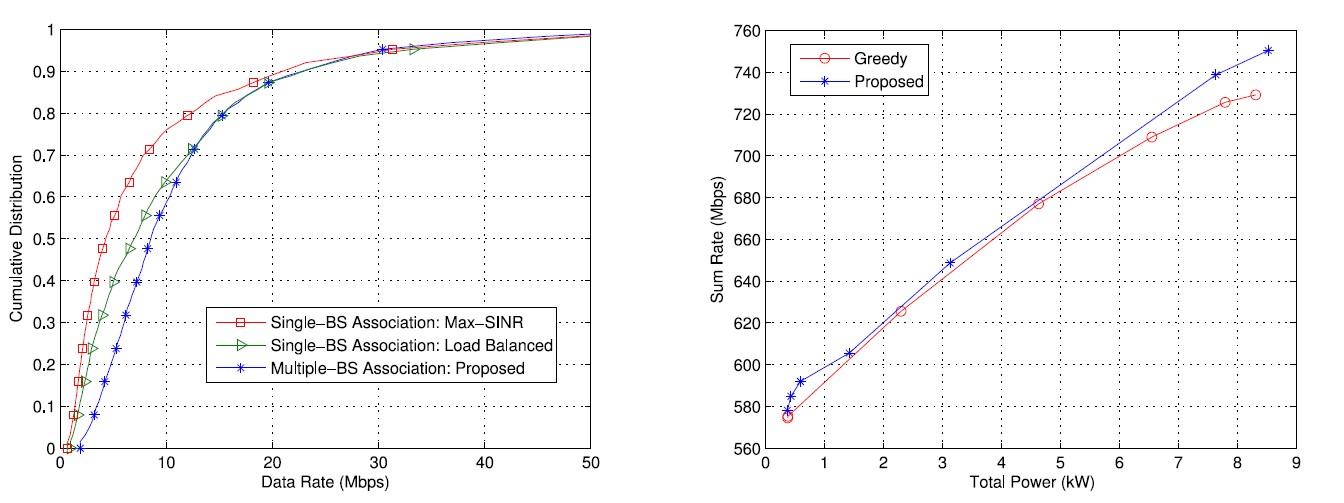

We also don’t want to make any assumptions about scheduling. After all, I’m trying to jointly optimize association, scheduling, and power control. Everything is flexible, even how many base stations can serve each user! Now, you may think that a UE served by more than one base station is strange. Yet after running simulations of my model and algorithm, the optimal solution obtained still has each user served by a single base station. By keeping the constraints open and flexible, we can glean more insights from the results we obtain.

So, the problem I’m trying to solve becomes:

Maximizing (Network Utility) – (Cost of Keeping Each Base Station Active)

Subject to:

The last constraint shows the optimization variable we are considering: x. The variable x is actually a three-dimensional array whose entry $x_{k,b,n}$ is the fraction of resources user k spends on base station b in band n. If $x_{k,b,n} = 0$, user k is not associated with base station b in band n. If $x_{k,b,n} > 0$, the user spends that fraction of its resources on base station b in band n, or all of them if $x_{k,b,n} = 1$. We are also optimizing over p, the transmission power of each base station. Putting these together, we derive an algorithm to solve the HetNet management problem using L0-norm optimization. The L0 “norm” counts a vector’s nonzero entries, so it can indicate whether a base station serves anyone at all. The algorithm itself is nothing novel. What is novel, at least in the settings considered, is the idea of using the cost function to turn off macrocells and thereby give the microcells a chance.
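
Here is a small Python sketch of how an L0 count over the slice $x_{:,b,:}$ can switch a base station's cost on or off. It is written as plain nested lists so that it needs no libraries. The helper names, the [0, 1] bound check, the example array, and the cost values are my own hypothetical illustration. They are not the exact formulation or code from my thesis.

```python
def l0_norm(values, tol=0.0):
    """Count entries whose magnitude exceeds tol (the so-called L0 "norm")."""
    return sum(1 for v in values if abs(v) > tol)


def check_fractions(x):
    """Assumed constraint: every x[k][b][n] is a fraction in [0, 1]."""
    return all(0.0 <= v <= 1.0 for user in x for bs in user for v in bs)


def bs_slice(x, b):
    """All entries x[k][b][n] involving base station b, over users k and bands n."""
    return [v for user in x for v in user[b]]


def activation_cost(x, costs):
    """Sum of costs[b] over base stations with at least one nonzero x[k][b][n]."""
    return sum(c for b, c in enumerate(costs) if l0_norm(bs_slice(x, b)) > 0)


# Hypothetical example: 2 users (k), 2 base stations (b: 0 = macro, 1 = micro), 2 bands (n).
x = [
    [[0.0, 0.0], [0.5, 0.5]],  # user 0 splits its resources over the micro's two bands
    [[0.0, 0.0], [1.0, 0.0]],  # user 1 uses the micro in band 0 only
]
costs = [10.0, 1.0]            # assumed: the macro costs far more to keep on

if __name__ == "__main__":
    print(check_fractions(x), activation_cost(x, costs))
```

Because this count jumps from 0 to 1, it cannot be fed directly to a gradient-based solver. L0 terms are commonly approximated with smooth or reweighted-L1 surrogates. This sketch makes no claim about which approximation the algorithm in my paper used.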

The results of the proposed algorithm are shown in blue in the figure above. I won’t go over the results in detail here, as they require a good amount of mathematical setup. For more details, you can check out my IEEE Signal Processing Letters paper:

Or my MASc. thesis:

Or the presentation slides:

***

Finally, I want to share one lesson from my two years as a research graduate student at the University of Toronto: new technology can be daunting. Researching and developing it is hard work, and getting the general public to accept it is even harder. However, I believe that humanity did not come this far, with a world so different from what it was 1000 years ago, just to stop advancing. There will always be a frontier for aspiring humans to conquer; it is the destiny of our species. I, for one, will forever be proud of my work, and I will always strive to stand up for the advancement of humankind. I believe it is time that we stop fearing and cowering before the work of our fellow human beings, and instead start working together, as a species, for our own good.